Experimentation @ iTech Media: From startup to multiple agile teams.

An experimentation operational system in detail.

Welcome to an evergreen Positive Experiments issue 🎉

This is an aggregate of all material created to formalize iTech Media’s Experimentation Operational System. This post will evolve as the team grows, learns, and receives feedback.

Last updated March 26th, 2022.

Six questions answered

What problem is the Operational System solving? What was the root cause?

Why do we run experiments in iTech Media?

What are the different workflows?

What type of skills are required to implement each workflow?

How did change management happen?

What is the outcome?

1. What problem is the Operational System solving?

iTech Media’s operational system is one of the experimentation building blocks, and we kept building it in parallel as we moved forward with other areas of our experimentation flywheel.

In his 1000 experiments club participation, Jonny Longden explains the concept better than we could.

—

There are building blocks to experimentation:

Strategy — What’s the plan? If experimentation is set to improve performance, what does performance mean for the organization?

Tools & Technology — Do you have access to the right data, tools, and technologies to understand the performance strategy? Have you got the right stack for testing?

Processes — Do you have the proper methods and ways of working to pass those things into production in a sustainable and scalable way?

People — Do you have the right skills to manage and properly use tools and technologies?

Structure — Does the current org setup facilitate and have the right incentives in place for the process to be executed with excellence?

Interestingly, many will talk about the culture of experimentation — yes, you have to have the right mindset for the organization.

However, you can't quite get there unless you build these building blocks in parallel.

Those two must go hand in hand.

Growing Pains

Experimentation and its foundations are very hard to criticize. If you think about it logically, everybody would agree that making changes and measuring the impact is mandatory. This is on paper, though; in reality, it is not that easy.

— Andrea Corvi, Sr. Experimentation Manager @ iTech Media

When Andrea Corvi joined iTech, the company had fewer than 50 people.

Everybody was really on board with experimentation and running tests was a breeze.

Growth brought new challenges.

There’s a lot written on creating a culture of experimentation for businesses but very little material on what it takes to maintain the culture as a company grows.

Root cause analysis and requirements

We’ve done a lot of work to identify what we needed to maintain the experimentation program and reach higher maturity.

With more people joining every month and an ever-growing product organization, we agreed that we needed:

A common language helps communicate plans and strategies while facilitating reporting standardization up and down the chain of command;

A clear remit eliminates ambiguity on how Product can leverage Experimentation expertise;

A scalable way of working avoids having to come back and refine the plan after we 2x the headcount;

Alignment with Experimentation and Analytics gives purpose and direction to where we’re going and how we help the business.

2. Why do we run experiments in iTech Media?

🔎 To measure the impact of product changes

🙅🏽♂️ To reduce risk by minimizing damage and test delivery costs

🤓 To learn about users’ reactions to a new experience.

To measure impact, reduce risks, and learn.

That’s why we experiment.

3. What are the different workflows?

The different activities we are responsible for are called streams.

There are two types of streams:

ACTIVE: different experiment types we run and support.

SUPPORT: advocacy and research, recurrent activities that act as tailwinds for the experimentation program.

Active streams explained with a story

We collected multiple experiments representing each stream in action for our internal teams to connect theory and practice.

We explained how streams work with a product scenario at Experiment Nation UnConference 2021.

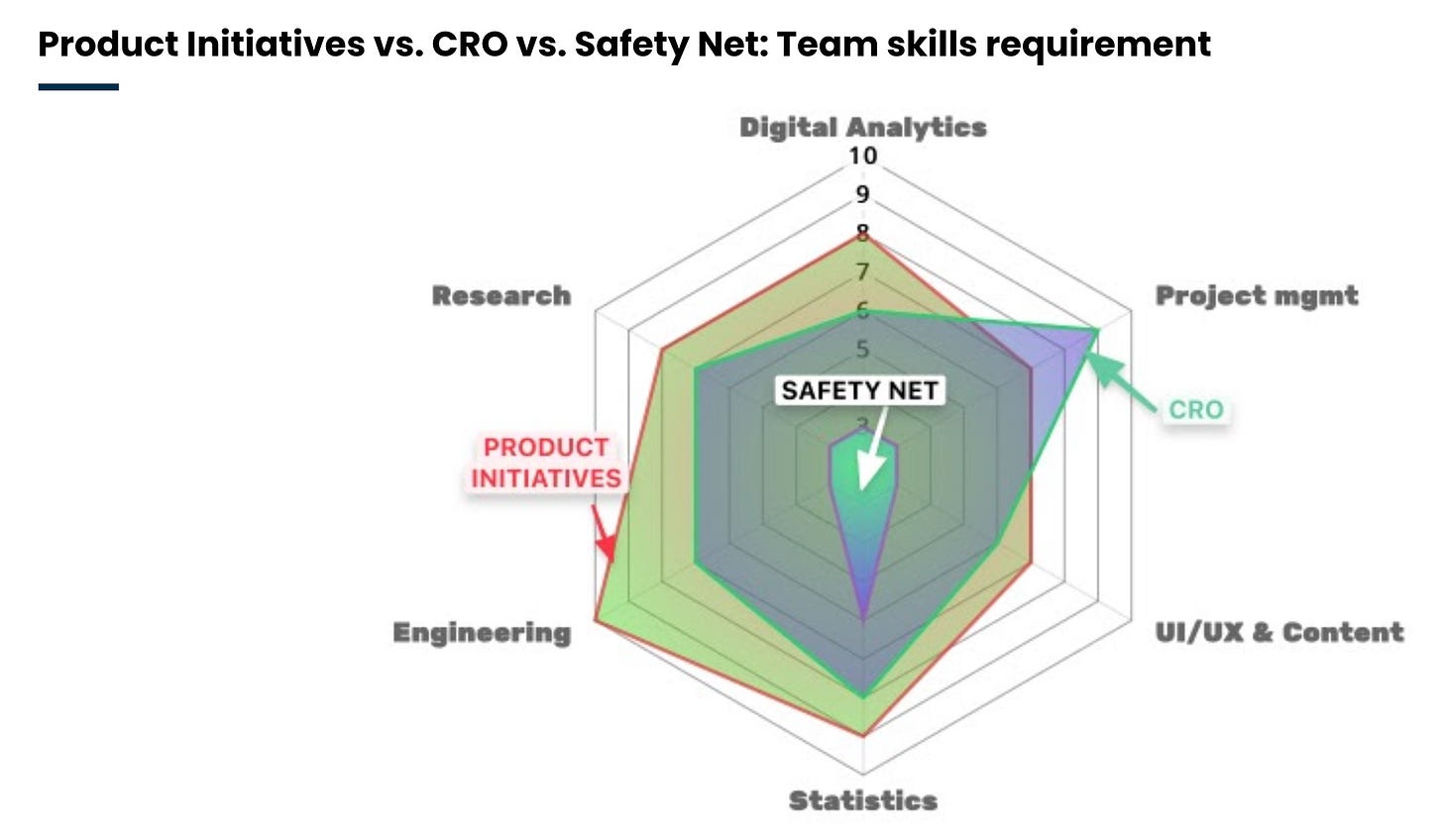

4. What type of skills are required to implement each workflow?

With the streams in place, the team often coaches Product Managers and General Managers on the best setup for running them at full capacity.

Inspired by Jonny Longden's explanation of the skills needed to build an experimentation function, we developed our own flavor of his skill map (original article here).

Mapping the dream team skills setup for each stream became the best visual representation to guide product leaders.

Andrea explains the reasoning and how we communicated with product leaders in more detail below.

5. How did change management happen?

The team went on a tour.

After leadership's buy-in, products that use the experimentation platform were onboarded onto the new system. The primary audiences were Product Managers and Delivery Leads.

The team made sure to clarify how:

each stream is defined

program metrics are monitored

operations translate to the new system.

Streams became a naming convention for a new dimension in our Experimentation Library.

Each experiment scorecard has a stream tag, and it facilitates reporting.

Performance per stream became part of our regular communications via monthly and quarterly newsletters, and it is a good metric to follow.
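To make the idea of a stream tag concrete, here is a minimal sketch of how tagged scorecards could be rolled up into a per-stream report. It is an illustration only, not iTech Media's actual Experimentation Library: the `Scorecard` fields, the stream labels (`stream-a`, `stream-b`), the outcome vocabulary, and the win-rate metric are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Scorecard:
    name: str     # experiment identifier (hypothetical)
    stream: str   # stream tag; the labels used below are placeholders
    outcome: str  # "win", "loss", or "inconclusive" (assumed vocabulary)


def performance_per_stream(scorecards):
    """Group scorecards by stream tag and summarize each group."""
    grouped = defaultdict(list)
    for card in scorecards:
        grouped[card.stream].append(card)

    report = {}
    for stream, cards in grouped.items():
        wins = sum(1 for card in cards if card.outcome == "win")
        report[stream] = {
            "experiments": len(cards),
            "wins": wins,
            "win_rate": wins / len(cards),
        }
    return report


# Hypothetical library entries, for illustration only.
library = [
    Scorecard("exp-001", "stream-a", "win"),
    Scorecard("exp-002", "stream-a", "loss"),
    Scorecard("exp-003", "stream-b", "inconclusive"),
    Scorecard("exp-004", "stream-a", "win"),
]

report = performance_per_stream(library)
for stream, stats in sorted(report.items()):
    print(stream, stats)
```

Because the tag is just another dimension on the scorecard, the same grouping works for any program metric, not only the win rate used here.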

6. What is the outcome?

Feedback across the board points to better reporting structures. For example, there was previously no consistent way to segment overall program performance. Now that is possible, and the data is helping the team prioritize.

Hiring became more assertive and job descriptions more specific.

Product OKR planning started considering stream activity.
